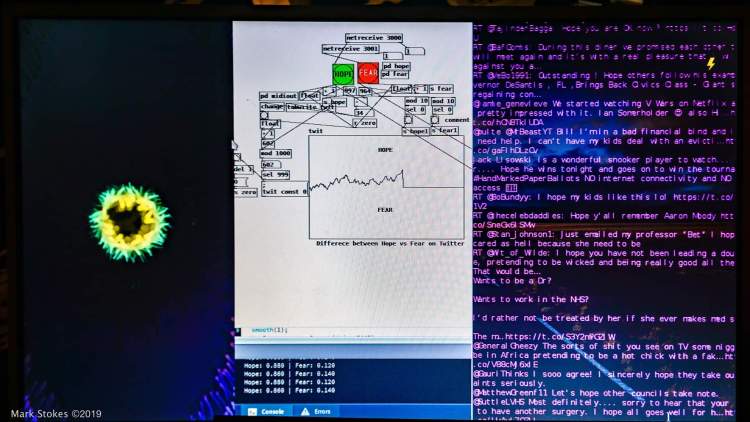

My newest iteration of Twitter-induced music is based on tracking Hope and Fear on a global scale.

I have a Raspberry Pi running a custom Python script that searches all of Twitter, live, for any mention of the words Hope or Fear. Whenever either word is mentioned, the script sends a message to Pure Data and prints the tweet in the terminal. Pure Data then tallies the mentions and graphs the difference between Hope and Fear. It also counts every 50 mentions: if the result is hopeful, it plays an ascending tone on 5 singing bowls, and if it is fearful, it plays a descending tone. This is achieved by sending MIDI signals from Pure Data to a dadamachines Automat.
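To make the counting logic concrete, here is a minimal Python sketch of the idea. In the installation this part lives in the Pure Data patch, so treat this as an illustration rather than the actual code. The names `HopeFearTracker`, `BOWL_NOTES` and `BLOCK_SIZE` are hypothetical, the MIDI note numbers are placeholders, and it assumes that "hopeful" is judged on the balance within each block of 50 mentions, with a tie counted as hopeful.

```python
import re
from dataclasses import dataclass

# Match "hope" or "fear" as whole words, ignoring case.
WORD_RE = re.compile(r"\b(hope|fear)\b", re.IGNORECASE)

# Placeholder MIDI notes, one per singing bowl (assumption, not the real tuning).
BOWL_NOTES = [60, 62, 64, 65, 67]

# Number of mentions after which a tone is played.
BLOCK_SIZE = 50


@dataclass
class HopeFearTracker:
    hope: int = 0           # total Hope mentions so far
    fear: int = 0           # total Fear mentions so far
    block_count: int = 0    # mentions seen in the current block
    block_balance: int = 0  # Hope minus Fear within the current block

    @property
    def difference(self) -> int:
        """Running Hope minus Fear, the value that would be graphed."""
        return self.hope - self.fear

    def feed(self, text: str) -> list:
        """Count the mentions in one tweet.

        Returns one note sequence for every block of BLOCK_SIZE mentions
        completed by this tweet (usually an empty list).
        """
        triggered = []
        for match in WORD_RE.finditer(text):
            if match.group(1).lower() == "hope":
                self.hope += 1
                self.block_balance += 1
            else:
                self.fear += 1
                self.block_balance -= 1
            self.block_count += 1
            if self.block_count == BLOCK_SIZE:
                triggered.append(self._close_block())
        return triggered

    def _close_block(self) -> list:
        """Pick ascending or descending notes, then start a new block."""
        hopeful = self.block_balance >= 0  # assumption: a tie counts as hopeful
        self.block_count = 0
        self.block_balance = 0
        return list(BOWL_NOTES) if hopeful else BOWL_NOTES[::-1]
```

Each tweet's text would be passed to `feed()`, and any returned note sequence would then be sent out as MIDI note messages, one bowl per note.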

I also have some live visuals generated in Processing, created by the fantastic Jake Dubber. These visuals are also controlled by the Hope vs Fear data, with Hope blooming from the centre and Fear spreading from the outer edges inwards.

This was first shown at the Tate Modern for their November 2019 Tate Late event with the Hackoustic community.

A variation of the installation creates its own sound file and envelope as time progresses, and can be listened to in this video:
